Drew’s Note: This week’s guest blogger had so much to say, we decided to break it up into two parts. So without further delay…Dr. Mark Klein. Again. Enjoy!

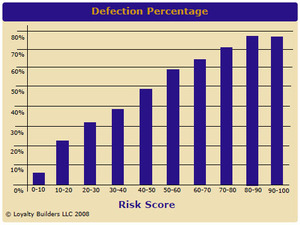

Now that we have an At-Risk score for each customer, we need a reasonable way to use it. Every customer is at risk to some degree. How do we find the threshold above which we need to take action?

One approach is based on the existing rate of defection, the [yearly] percent of customers who go inactive by not making a purchase within the time that defines an active customer.

We classify a customer as At-Risk if they have a probability of defection that is equal to or greater than the overall population defection rate. For example, let’s assume that 15% of the total customer population defects each year. Then we say a customer is At-Risk if the logistic regression model gives them a probability of defection of 15% or greater. This is a simple, pragmatic, and effective way to set the threshold.
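To make the threshold rule concrete, here is a minimal Python sketch. It assumes the model's output is already available as a mapping from customer ID to probability of defection; the function name `flag_at_risk` and the sample data are hypothetical and are not part of Longbow or any specific tool.

```python
def flag_at_risk(defection_probs, population_defection_rate):
    """Mark each customer At-Risk when their predicted probability of
    defection is equal to or greater than the population defection rate.

    defection_probs: dict of customer id -> probability (0.0 to 1.0),
                     assumed to come from the logistic regression model.
    population_defection_rate: overall yearly defection rate, e.g. 0.15.
    """
    return {
        customer_id: prob >= population_defection_rate
        for customer_id, prob in defection_probs.items()
    }


# Hypothetical scores for three customers, with a 15% yearly defection rate.
scores = {"cust-1": 0.15, "cust-2": 0.10, "cust-3": 0.40}
flags = flag_at_risk(scores, 0.15)
```

With these made-up numbers, the first and third customers are flagged and the second is not.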

It may be useful to consider a combination of At-Risk score and a customer’s lifetime value when deciding which customers warrant an aggressive win-back campaign. Working to retain a customer with low lifetime value (future revenue potential) and high probability of defection is not the best use of resources. Instead, concentrate on customers with higher lifetime values and more tractable At-Risk scores.
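One way to combine the two numbers is a simple filter, sketched below in Python. The cutoffs `max_prob` (the point beyond which a customer is treated as too far gone to win back) and `min_ltv` (the smallest lifetime value worth a campaign) are assumptions for illustration; the right values depend on the business, and the field names are hypothetical.

```python
def win_back_candidates(customers, threshold, max_prob, min_ltv):
    """Pick At-Risk customers who are still worth an aggressive win-back.

    customers: list of dicts with "id", "p_defect", and "ltv" keys
               (hypothetical field names).
    threshold: At-Risk threshold, e.g. the population defection rate.
    max_prob:  assumed ceiling above which the score is not "tractable".
    min_ltv:   assumed minimum lifetime value worth the campaign cost.
    """
    picked = [
        c for c in customers
        if threshold <= c["p_defect"] <= max_prob and c["ltv"] >= min_ltv
    ]
    # Highest lifetime value first, so the campaign budget goes there first.
    return sorted(picked, key=lambda c: c["ltv"], reverse=True)


# Made-up example data.
customers = [
    {"id": "a", "p_defect": 0.20, "ltv": 500.0},
    {"id": "b", "p_defect": 0.90, "ltv": 900.0},   # very likely to defect
    {"id": "c", "p_defect": 0.30, "ltv": 50.0},    # low lifetime value
    {"id": "d", "p_defect": 0.40, "ltv": 800.0},
    {"id": "e", "p_defect": 0.05, "ltv": 1000.0},  # not At-Risk
]
targets = win_back_candidates(customers, threshold=0.15, max_prob=0.60, min_ltv=100.0)
```

A hard filter is the simplest choice; a weighted score that trades lifetime value against probability of defection would be a reasonable alternative.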

Measuring the accuracy of At-Risk predictions

It is important to regularly assess the accuracy of the predictions, and to update the analysis when the predictions have shown a significant decrease in accuracy. How fast conditions change and how much accuracy the model loses is a function of the nature of the business and the rate of change of the indicator variables, but there are many situations where a model loses its accuracy within a few months. Model accuracy needs to be spot-checked on a quarterly basis at a minimum, and if it is financially viable, monthly assessments are recommended.

The best way to measure model accuracy is with a correlation coefficient that distinguishes between true positives, true negatives, false positives, and false negatives. Here a “negative” is a predicted defection and a “positive” is a predicted purchase. A false negative, for example, is a customer for whom the model predicted defection but who in fact made a purchase. The true negatives are the customers of interest: the model predicted defection and the customer did indeed defect. We regularly see results where true negatives are accurately identified 80% of the time.
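One correlation coefficient built from exactly these four counts is the Matthews correlation coefficient (also called the phi coefficient). Whether this is the specific measure the author uses is an assumption; the Python sketch below simply shows how such a coefficient is computed. It treats a purchase as the positive outcome and a defection as the negative one, and all names and data are hypothetical.

```python
import math


def confusion_counts(predicted_purchase, actual_purchase):
    """Count outcomes, treating purchase as positive and defection as negative.

    Both arguments are equal-length sequences of booleans:
    True = purchase (stays active), False = defection.
    Returns (tp, tn, fp, fn).
    """
    tp = tn = fp = fn = 0
    for predicted, actual in zip(predicted_purchase, actual_purchase):
        if predicted and actual:
            tp += 1          # predicted purchase, did purchase
        elif not predicted and not actual:
            tn += 1          # predicted defection, did defect
        elif predicted and not actual:
            fp += 1          # predicted purchase, but defected
        else:
            fn += 1          # predicted defection, but purchased
    return tp, tn, fp, fn


def matthews_corrcoef(tp, tn, fp, fn):
    """Matthews correlation coefficient: +1 is perfect, 0 is no better
    than chance, -1 is total disagreement."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denom == 0:
        return 0.0  # common convention when a row or column of counts is empty
    return (tp * tn - fp * fn) / denom


# Made-up predictions and outcomes for five customers.
predicted = [True, True, False, False, False]
actual = [True, False, False, False, True]
tp, tn, fp, fn = confusion_counts(predicted, actual)
mcc = matthews_corrcoef(tp, tn, fp, fn)
```

The coefficient does not change if the positive and negative labels are swapped, so it gives the same answer whichever outcome is called "positive".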

But of course to make these measurements, a business must be willing to not reach out to at least some at-risk customers in order to measure the accuracy of the model. It’s hard for many companies to resist touching some of these potential defectors just to measure model accuracy. The best approach is to use an automated system to regularly identify at-risk customers, with less frequent but still periodic checks using control groups.
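A control group can be as simple as a random holdout of the at-risk list. The Python sketch below is one way to do it; the 10% holdout fraction, the fixed seed, and the function name are assumptions chosen for illustration, not recommendations from the author.

```python
import random


def split_control_group(at_risk_ids, control_fraction=0.1, seed=0):
    """Randomly hold out a share of at-risk customers who will NOT be
    contacted, so their real behavior can be compared with the prediction.

    Returns (control_ids, contact_ids). control_fraction and seed are
    assumed values; pick ones that suit the business.
    """
    ids = sorted(at_risk_ids)        # stable order so the seed is reproducible
    rng = random.Random(seed)        # local generator, leaves global state alone
    n_control = round(len(ids) * control_fraction)
    control = set(rng.sample(ids, n_control))
    contact = [cid for cid in ids if cid not in control]
    return sorted(control), contact


# Twenty hypothetical at-risk customer IDs.
at_risk = [f"cust-{n:02d}" for n in range(20)]
control_ids, contact_ids = split_control_group(at_risk, control_fraction=0.1)
```

Only the customers in `contact_ids` get the win-back campaign; the ones in `control_ids` are left alone and later checked against what the model predicted.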

Given the worth of a customer and the high cost of acquiring new ones, finding at-risk customers is a mission-critical task. This brief overview is just an outline of the process; to learn more, see the author’s free eBook, Field Guide to Mathematical Marketing.

Feel like you jumped into the middle of the conversation? Maybe you missed part one of this post.

Dr. Mark Klein is CEO of Loyalty Builders LLC, the developers of Longbow, a web-based direct marketing system that predicts the future buying behavior of existing customers. He blogs frequently on Mathematical Marketing and recently published his first novel.

Every Friday is "grab the mic" day. Want to grab the mic and be a guest blogger on Drew’s Marketing Minute? Shoot me an e-mail.